-

▪

Manipulation Research:

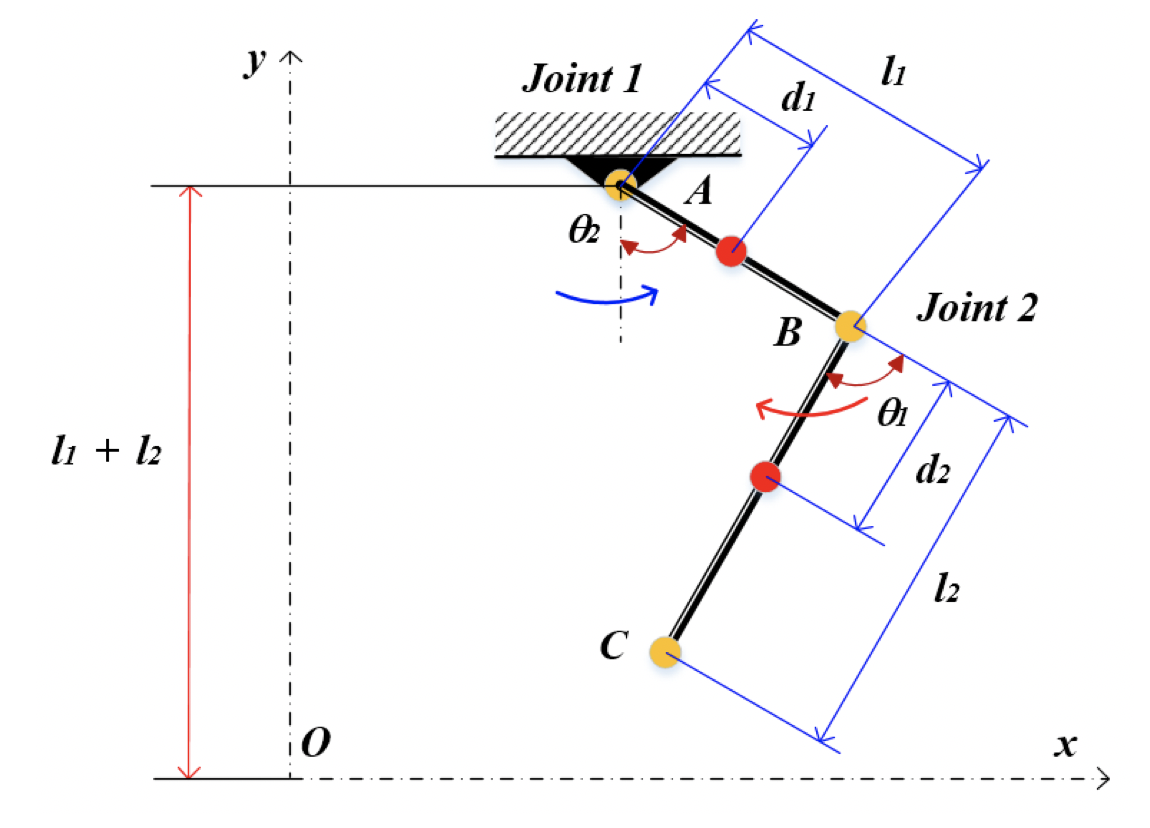

Develop and optimize algorithms for

Imitation Learning (ACT, Diffusion),

advanced motion planning (RRT, trajectory optimization),

inverse kinematics,

and AI-driven perception including SLAM and multimodal sensor fusion.

- Machine Learning & AI Models: Build and deploy machine learning models for predictive maintenance, anomaly detection, object recognition, pose estimation, and task automation. Use modern AI pipelines including deep learning, transformers, reinforcement learning, and foundation models tailored for robotics applications.

-

▪

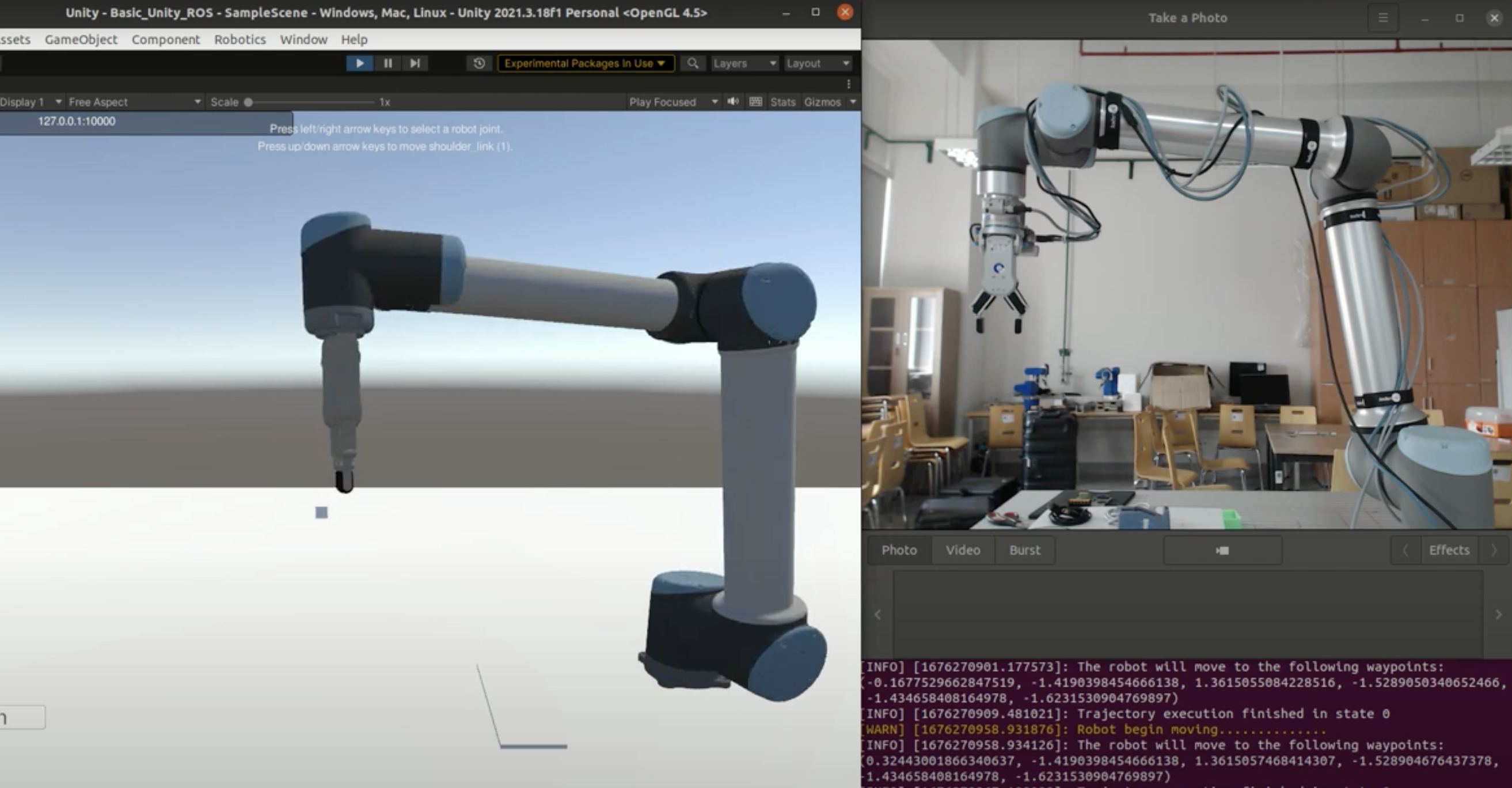

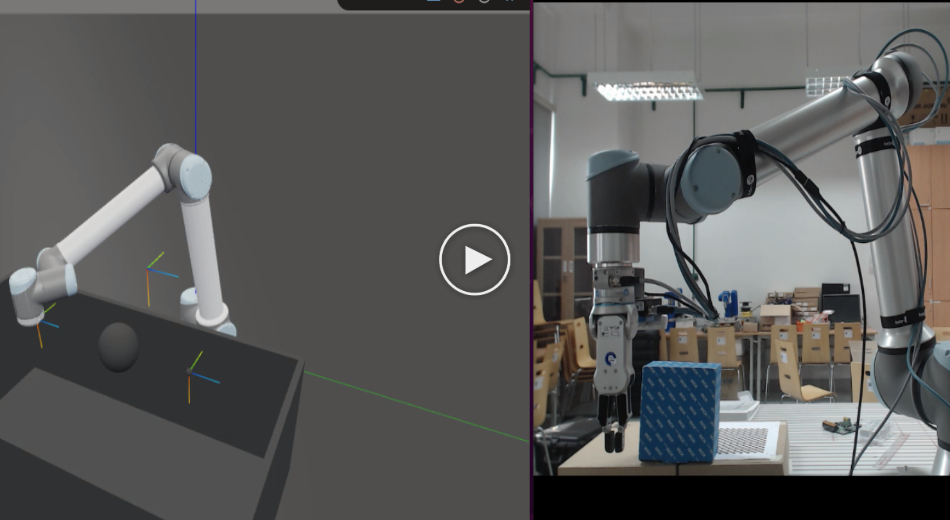

Simulation & Digital Twin:

Configure high-fidelity Digital Twin environments to validate robotic behaviors,

system stability, control robustness, and full mission workflows from simulation to deployment.

- System Design: Architect robotics software systems using middleware such as ROS/ROS 2, integrated with simulation tools such as Gazebo and Isaac Sim and with high-fidelity Digital Twin pipelines.

-

▪

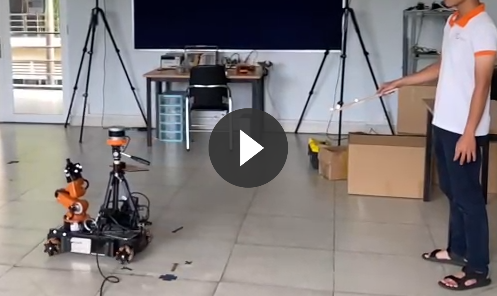

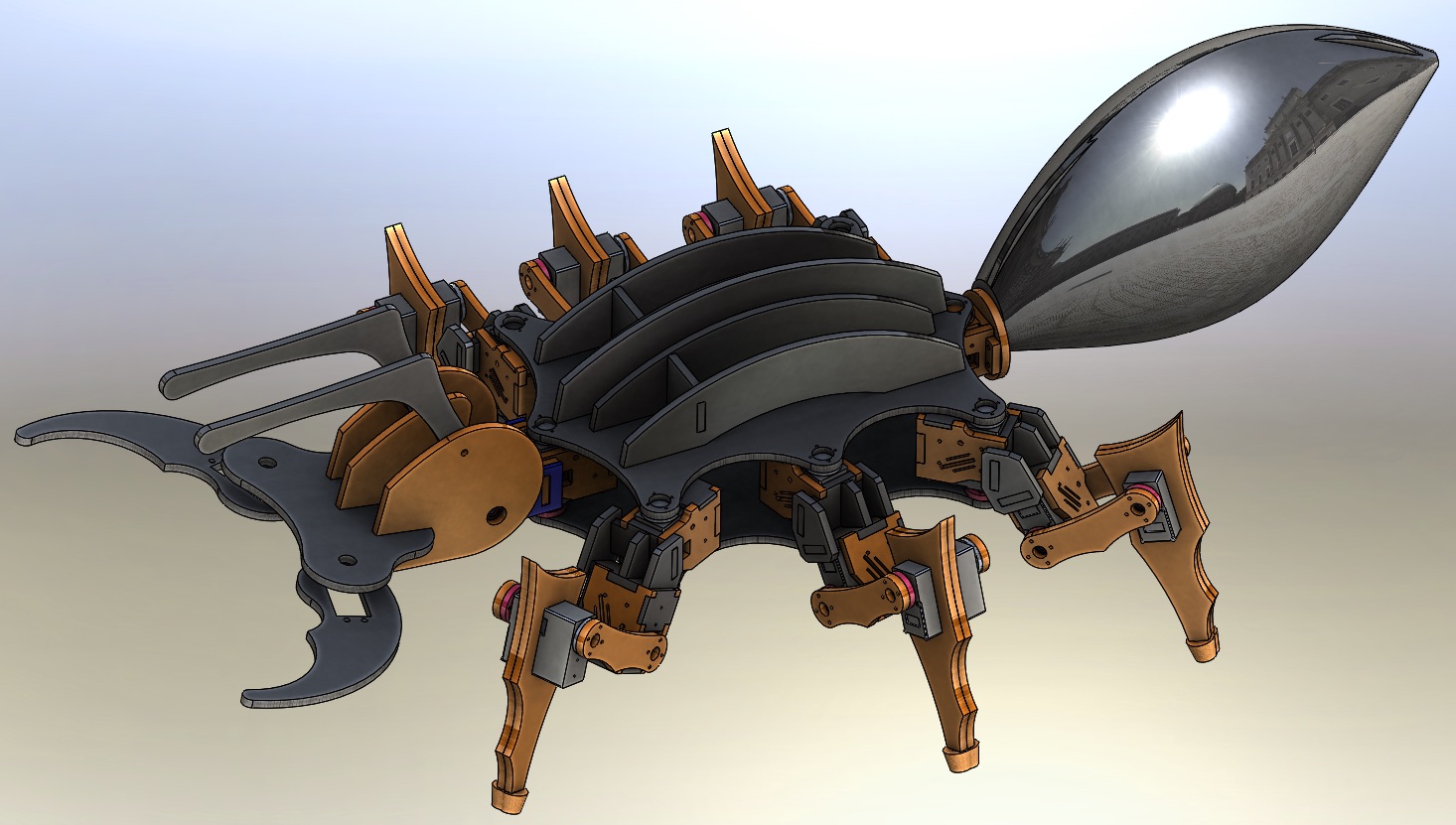

Mobile Robotics:

Develop autonomous navigation stacks, including mapping, localization,

obstacle avoidance, and path planning for AMRs, AGVs, and outdoor UGV platforms.

Integrate sensor suites such as LiDAR, GPS, IMU, and stereo/depth cameras

to achieve robust autonomy in dynamic industrial environments.

- Integration: Interface specialized hardware with industrial robots (UR) and key components (LiDAR, depth cameras, actuators, embedded systems) via middleware such as ROS/ROS 2 and real-time communication bridges.
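As one concrete illustration of the inverse kinematics work listed under Manipulation Research above, the following is a minimal sketch of the closed-form solution for a planar two-link arm. It is a textbook example, not the lab's implementation: the function names `two_link_ik` and `two_link_fk`, the `elbow_sign` parameter, and the link lengths used in the demo are all assumptions made for illustration. Real manipulators such as a 6-DoF arm need a more general solver.

```python
import math


def two_link_ik(x, y, l1, l2, elbow_sign=1):
    """Joint angles (theta1, theta2) in radians that place the tip of a
    planar two-link arm at (x, y). elbow_sign (+1 or -1) selects one of
    the two mirror-image solutions. Hypothetical helper for illustration."""
    d2 = x * x + y * y
    # Law of cosines on the triangle formed by the two links and the target.
    cos_t2 = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_t2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    cos_t2 = max(-1.0, min(1.0, cos_t2))  # guard against rounding error
    theta2 = elbow_sign * math.acos(cos_t2)
    # Shoulder angle: direction to the target minus the offset caused by link 2.
    theta1 = math.atan2(y, x) - math.atan2(
        l2 * math.sin(theta2), l1 + l2 * math.cos(theta2)
    )
    return theta1, theta2


def two_link_fk(theta1, theta2, l1, l2):
    """Forward kinematics, used here only to check the IK result."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


if __name__ == "__main__":
    # Illustrative link lengths and target (assumed values, in meters).
    angles = two_link_ik(0.4, 0.3, l1=0.35, l2=0.25)
    print("joint angles [rad]:", angles)
    print("reached point [m]:", two_link_fk(*angles, 0.35, 0.25))
```

Running the forward kinematics on the returned angles is a cheap round-trip check that the solution actually reaches the requested point.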

Technical Capabilities

Programming & Frameworks

Core development in low-level and high-level languages for robotics and AI.

Lab Tutorials & Curriculum

- Robotics and Autonomous Systems (ROS, PyTorch)

- Embedded Intelligent System (ROS, OpenCV)

- Microcontroller / Digital Signal Processing

- Robotics Workshop (CAD and PCB Design)

Hands-on Hardware Experience

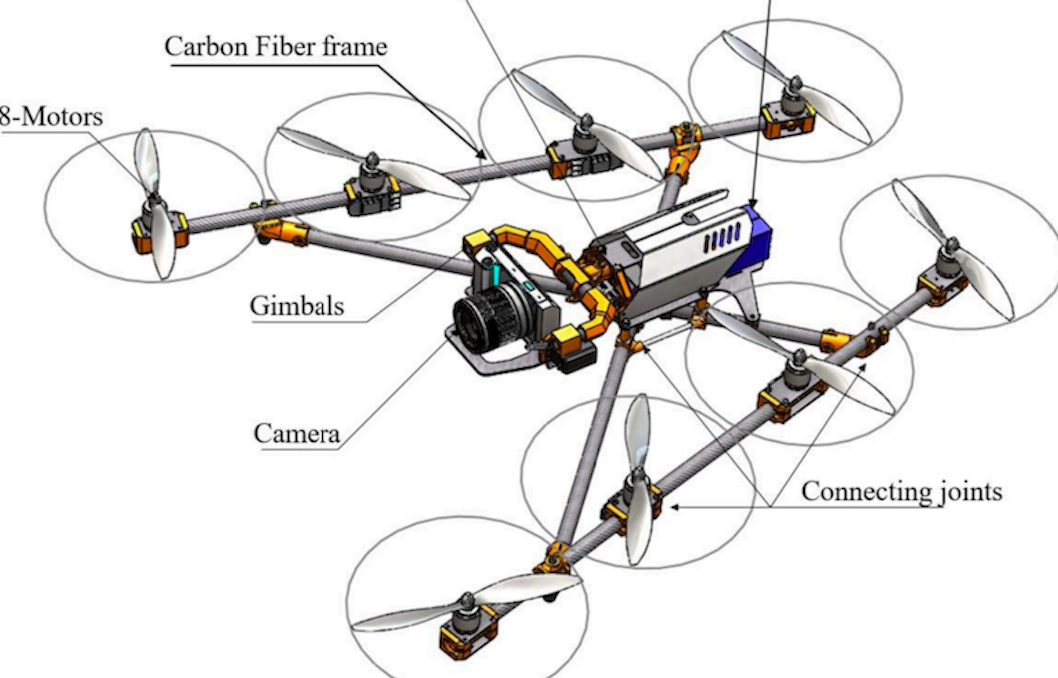

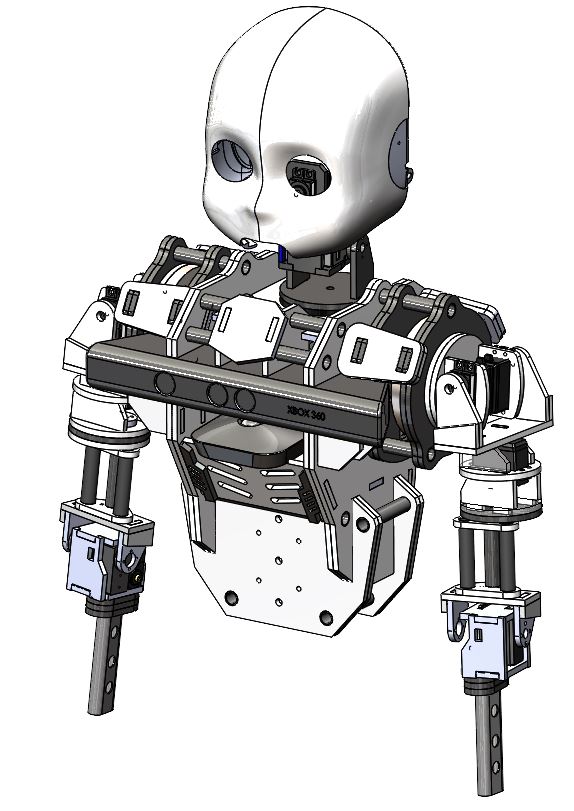

- Robot Platforms: UR10e, KUKA youBot, TurtleBot3, NAO, DJI drone.

- Sensors: Velodyne VLP-16, Xsens MTi-30 IMU, Intel RealSense, SICK LiDAR.

- Embedded Computers: NVIDIA Jetson family, Raspberry Pi, NUC, Arduino.

- Actuators: Servo motors, linear actuators, motor drivers.

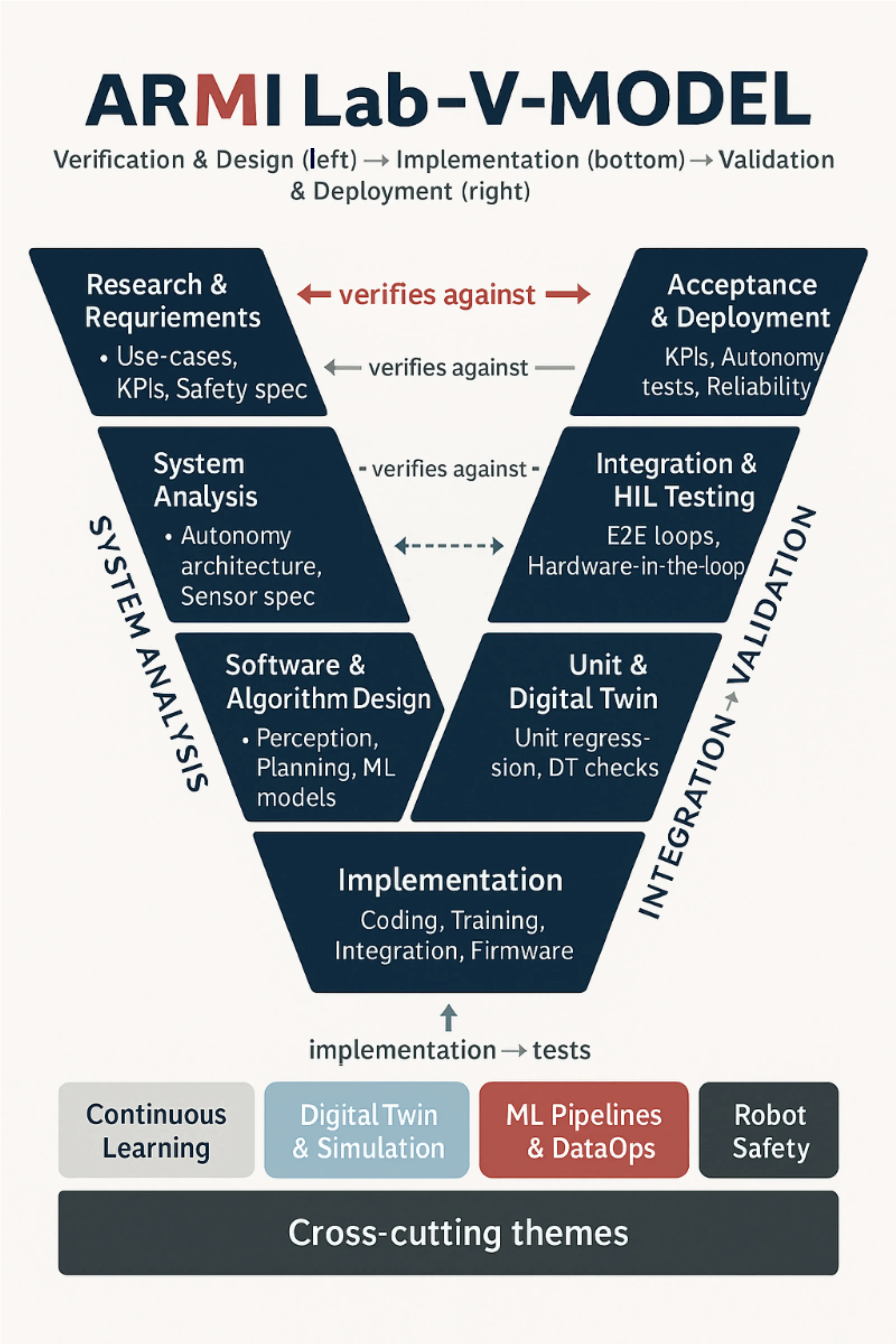

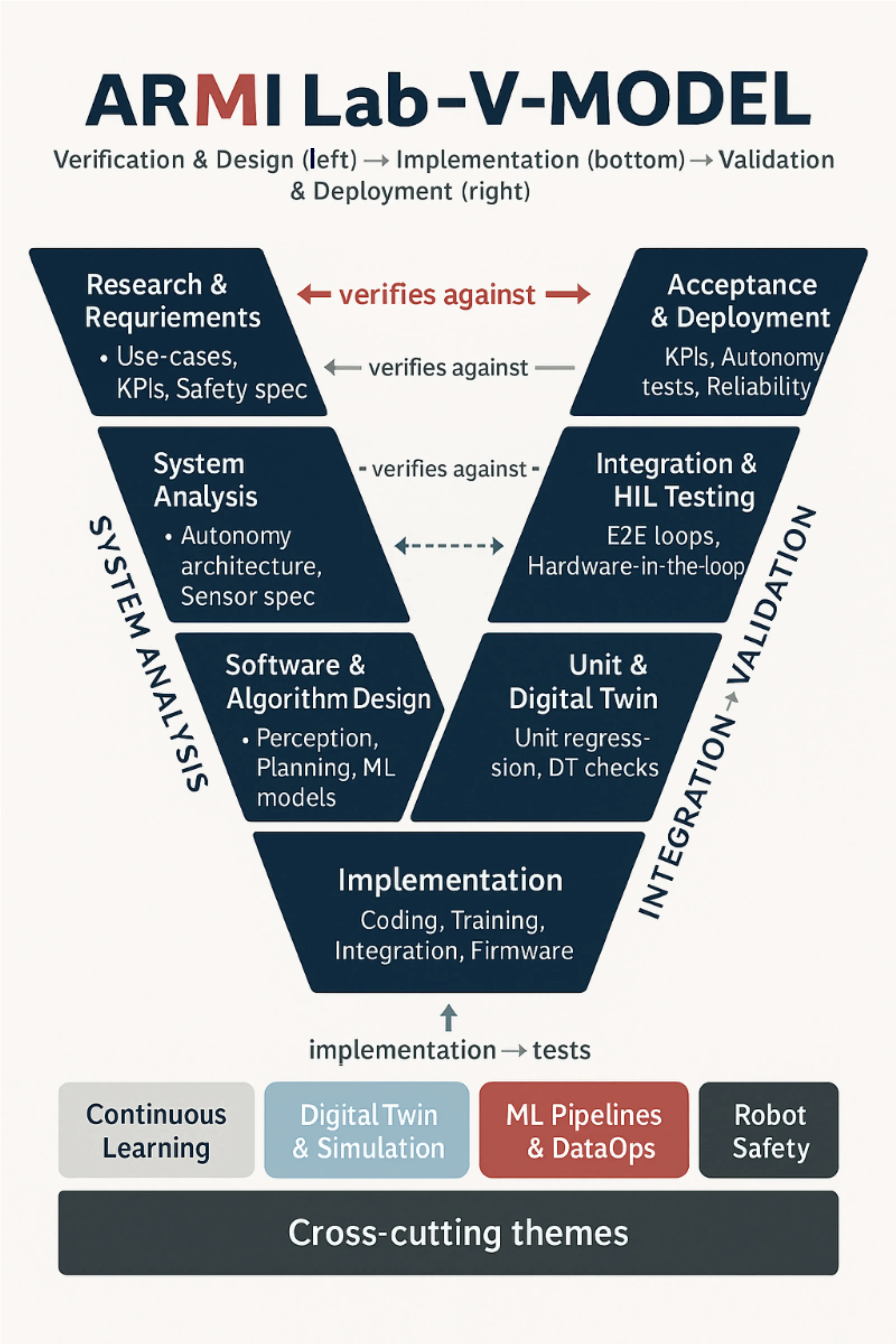

ARMI V-Research Model

The ARMI V-Research Model is a modern adaptation of the classical V-Model, redesigned for autonomous robotics and machine intelligence workflows. It aligns verification (left side) with validation (right side), ensuring that every research activity, from requirements, system design, perception algorithms, and ML model pipelines through to field testing, is tested against measurable criteria.

This model guides ARMI Lab in developing safe, reliable, and high-performance robotic systems using digital twins, simulation-driven development, ML lifecycle management, and continuous sim-to-real improvement.

The model emphasizes four key principles:

- Simulation-first development using digital twins (Unity, Isaac Sim, Gazebo) for safe testing, rapid iteration, and synthetic data generation.

- Integrated ML lifecycle driving perception, planning, and decision-making modules through continuous training, monitoring, and model validation.

- Hardware-in-the-loop (HIL) and system-in-the-loop validation to ensure robust sim-to-real transfer and safety-critical performance.

- Full verification & validation alignment so that research requirements, algorithms, and system behaviors always map to measurable real-world tests, acceptance KPIs, and field trials.
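The fourth principle, mapping every requirement to measurable tests and acceptance KPIs, can be sketched as a small traceability check. This is only an illustration under stated assumptions: the `Kpi` and `Requirement` classes, the `audit` function, and every requirement ID, KPI name, threshold, and measurement below are hypothetical and do not come from ARMI Lab's actual tooling or results.

```python
from dataclasses import dataclass, field


@dataclass
class Kpi:
    """A measurable acceptance criterion (hypothetical structure)."""
    name: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.threshold
        return measured <= self.threshold


@dataclass
class Requirement:
    """A research or system requirement with the KPIs that validate it."""
    req_id: str
    description: str
    kpis: list = field(default_factory=list)


def audit(requirements, measurements):
    """Return (unmapped, failed).

    unmapped: IDs of requirements that have no KPI attached.
    failed:   (req_id, kpi_name) pairs that are unmeasured or miss the threshold.
    """
    unmapped = [r.req_id for r in requirements if not r.kpis]
    failed = []
    for r in requirements:
        for k in r.kpis:
            value = measurements.get(k.name)
            if value is None or not k.passes(value):
                failed.append((r.req_id, k.name))
    return unmapped, failed


# Made-up example data, for illustration only.
requirements = [
    Requirement("R1", "Reliable grasping", [Kpi("grasp_success_rate", 0.90)]),
    Requirement("R2", "Accurate localization",
                [Kpi("localization_rmse_m", 0.05, higher_is_better=False)]),
    Requirement("R3", "Safe operation near people"),  # no KPI defined yet
]
measurements = {"grasp_success_rate": 0.93, "localization_rmse_m": 0.08}

if __name__ == "__main__":
    unmapped, failed = audit(requirements, measurements)
    print("requirements without a KPI:", unmapped)
    print("KPIs not met:", failed)
```

With the made-up data above, `R3` is reported as unmapped and `R2` as failing its localization KPI. Keeping the mapping explicit makes gaps visible before field trials rather than after.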

This V-Research framework ensures that ARMI Lab produces scalable robotic technologies with strong scientific rigor, repeatability, and industrial-grade robustness, from early concept development to deployment in real autonomous robots.